The Webmind AI Engine – a True Digital Mind in the Making

Ben Goertzel

April 2001

AI – from Vision to Reality

Artificial intelligence is a burgeoning subdiscipline of computer science these days. But it almost entirely focuses on highly specialized problems constituting small aspects of intelligence. “Real AI” – the creation of computer programs with general intelligence, self-awareness, autonomy, integrated cognition, perception and action – is still basically the stuff of science fiction.

But it doesn’t have to be that way. In our work at Webmind Inc. from 1997 to 2000, my colleagues and I transformed a promising but incomplete conceptual and mathematical design for a real AI into a comprehensive, detailed software design, and implemented a large amount of the code needed to make this design work. At its peak, the team working on this project numbered 50 scientists and engineers, spread across four continents. In late March 2001, three and a half years after a group of friends and I founded it, Webmind Inc. succumbed to the bear market for tech start-ups. But the core of the AI R&D team continues working and is seeking funding to continue its work. Assuming this funding is secured, we believe we can complete the first version of the Webmind AI Engine – a program that knows who and what it is, can hold intelligent conversations, and progressively learns from its experiences in various domains – within 18-24 months. Within another couple of years after that, we should be able to give the program the ability to rewrite its own source code for improved intelligence, thus setting up a trajectory of exponentially increasing software intelligence that may well set the Singularity in motion big-time.

This article gives an overview of the Webmind AI Engine – the philosophical and psychological concepts underlying it, the broad outlines of the software design itself, and how this AI program fits into the broader technological advances that surround us, including the transformation of the Internet into a global brain and the Singularity.

Is AI Possible?

Not everyone believes it’s possible to create a real AI program. There are several variants of this position, some more sensible than others.

First, there is the idea that only creatures granted minds by God can possess intelligence. This may be a common perspective, but it isn’t really worth discussing in a scientific context.

More interesting is the notion that digital computers can’t be intelligent because mind is intrinsically a quantum phenomenon. This is actually a claim of some subtlety, because David Deutsch has proved that quantum computers can’t compute anything beyond what ordinary digital computers can. But still, in some cases, quantum computers can compute things much faster on average than digital computers.

And a few mavericks like Stuart Hameroff and Roger Penrose have argued that non-computational quantum gravity phenomena are at the core of biological intelligence. Of course, there is as yet no solid evidence of cognitively significant quantum phenomena in the brain. But a lot of things are unknown about the brain, and about quantum gravity for that matter, so these points of view can’t be ruled out. My own take on this is: yes, it’s possible (though unproven) that quantum phenomena are used by the human brain to accelerate certain kinds of problem solving. On the other hand, digital computers have their own special ways of accelerating problem solving, such as super-fast, highly accurate arithmetic.

An even more cogent objection is that, even if it’s possible for a digital computer to be conscious, there may be no way to figure out how to make such a program except by copying the human brain very closely, or by running a humongously time-consuming process of evolution roughly emulating the evolutionary process that gave rise to human intelligence. We don’t have the neurophysiological knowledge to closely copy the human brain, and simulating a decent-sized primordial soup on contemporary computers is simply not possible. This objection to AI is not an evasive tactic like the others; it’s a serious one.

But I believe we’ve gotten around it, by using a combination of psychological, neurophysiological, mathematical and philosophical cues to puzzle out a workable architecture and dynamics for machine intelligence. As mind engineers, we have to do a lot of the work that evolution did in creating the human mind/brain. An engineered mind like the Webmind AI Engine will have some fundamentally different characteristics from an evolved mind like the human brain, but this isn’t necessarily problematic, since our goal is not to simulate human intelligence but rather to create an intelligent digital mind that knows it’s digital and uses the peculiarities of its digitality to its best advantage.

The basic philosophy of mind underlying the AI Engine work is that mind is not tied to any particular set of physical processes or structures. Rather, “mind” is shorthand for a certain pattern of organization and evolution of patterns. This pattern of organization and evolution can emerge from a brain, but it can also emerge from a computer system. A digital mind will never be exactly like a human mind, but it will manifest many of the same higher-level structures and dynamics.

To create a digital mind, one has to figure out what abstract structures and dynamics characterize “mind in general,” and then figure out how to embody these in the digital computing substrate. We came into the Webmind Inc. AI R&D project in 1997 with a lot of ideas about the abstract structures and dynamics underlying mind and a simple initial design for a computer implementation; now, in 2001, after copious analysis and experimentation, the mapping between mind structures and dynamics and computational structures and dynamics is crystal clear.

What is “Intelligence”?

Intelligence doesn’t mean precisely simulating human intelligence. The Webmind AI Engine won’t ever do that, and it would be unreasonable to expect it to, given that it lacks a human body. The Turing Test, “write a computer program that can simulate a human in a text-based conversational interchange,” serves to make the theoretical point that intelligence is defined by behavior rather than by mystical qualities, so that if a program could act like a human, it should be considered as intelligent as a human. But it is not useful as a guide for practical AI development.

We don’t have an IQ test for the Webmind AI Engine. The creation of such a test might be an interesting task, but it can’t even be approached until there are a lot of intelligent computer programs of the same type. IQ tests work fairly well within a single culture, and much worse across cultures – how much worse will they work across species, or across different types of computer programs, which may well be as different as different species of animals? What we needed to guide our “real AI” development was something much more basic than an IQ test: a working, practical understanding of the nature of intelligence, to be used as an intuitive guide for our work.

The working definition of intelligence that I started out the project with builds on various ideas from psychology and engineering, as documented in a number of my academic research books. It was, simply, as follows:

Intelligence is the ability to achieve complex goals in a complex environment.

The Webmind AI Engine work was also motivated by a closely related vision of intelligence, provided by Pei Wang, Webmind Inc.’s first paid employee and Director of Research. Pei understands intelligence as, roughly speaking, “the ability of working and adapting to the environment with insufficient knowledge and resources.” More concretely, he believes that an intelligent system is one that works under the Assumption of Insufficient Knowledge and Resources (AIKR), meaning that the system must be, at the same time:

· a finite system – the system's computing power, as well as its working and storage space, is limited;

· a real-time system – the tasks that the system has to process, including the assimilation of new knowledge and the making of decisions, can emerge at any time, and all have deadlines attached to them;

· an ampliative system – the system not only can retrieve available knowledge and derive sound conclusions from it, but also can make refutable hypotheses and guesses based on it when no certain conclusion can be drawn;

· an open system – no restriction is imposed on the relationship between old knowledge and new knowledge, as long as they are representable in the system's interface language;

· a self-organized system – the system can accommodate itself to new knowledge, and adjust its memory structure and mechanism to improve its time and space efficiency, under the assumption that future situations will be similar to past situations.
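Pei’s list describes properties rather than an algorithm, but the first two, finiteness and real-time operation, are easy to make concrete. The following is a minimal sketch under illustrative assumptions, not code from the AI Engine or from Pei’s own systems: the `Task` and `BoundedTaskBuffer` names, the integer clock and the drop-the-least-important-task policy are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Task:
    # Hypothetical task record: names, priorities and deadlines are illustrative.
    name: str
    priority: float  # higher means more important
    deadline: int    # last time step at which the task is still worth doing


class BoundedTaskBuffer:
    """Finite: holds at most `capacity` tasks. Real-time: every task has a deadline."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.tasks: List[Task] = []

    def add(self, task: Task) -> None:
        # New tasks can arrive at any time.
        self.tasks.append(task)
        if len(self.tasks) > self.capacity:
            # Insufficient resources: drop the least important task.
            self.tasks.remove(min(self.tasks, key=lambda t: t.priority))

    def next_task(self, now: int) -> Optional[Task]:
        # Tasks whose deadline has passed are discarded without being processed.
        self.tasks = [t for t in self.tasks if t.deadline >= now]
        if not self.tasks:
            return None
        best = max(self.tasks, key=lambda t: t.priority)
        self.tasks.remove(best)
        return best


# Usage: capacity 2, so adding a third task evicts the lowest-priority one.
buf = BoundedTaskBuffer(capacity=2)
buf.add(Task("answer user", priority=0.9, deadline=5))
buf.add(Task("index news", priority=0.3, deadline=1))
buf.add(Task("revise beliefs", priority=0.5, deadline=10))
remaining = [t.name for t in buf.tasks]
chosen = buf.next_task(now=6)  # "answer user" has expired by time 6
```

The sketch covers only the first two properties; ampliative reasoning, openness and self-organization need much more machinery.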

Obviously, Pei’s definition and mine have a close relationship. My “complex goals in complex environments” definition is purely behavioral: it doesn’t specify any particular experiences or structures or processes as characteristic of intelligent systems. I think this is as it should be. Intelligence is something systems display; how they achieve it under the hood is another story.

On the other hand, it may well be that certain structures and processes and experiences are necessary aspects of any sufficiently intelligent system. My guess is that the science of 2050 will contain laws of the form: any sufficiently intelligent system has got to have this list of structures and has got to manifest this list of processes. Of course, a full science along these lines is not necessary for understanding how to design an intelligent system. But we need some results like this in order to proceed toward real AI today, and Pei’s definition of intelligence is a step in this direction. For a real physical system to achieve complex goals in complex environments, it has got to be finite, real-time, ampliative and self-organized. It might well be possible to prove this mathematically, but this is not the direction we have taken; instead, we have taken this much to be clear and directed our efforts toward more concrete tasks.

So, then, when I say that the AI Engine is an intelligent system, what I mean is that it is capable of achieving a variety of complex goals in the complex environment that is the Internet, using finite resources and finite knowledge.

To go beyond this fairly abstract statement, one has to specify something about what kinds of goals and environments one is interested in. In the case of biological intelligence, the key goals are survival of the organism and its DNA (the latter represented by the organism’s offspring and its relatives). These lead to subgoals like reproductive success, status among one’s peers, and so forth – which lead to refined cultural subgoals like career success, intellectual advancement, and so forth.

In our case, the goals that the AI Engine version 1.0 is expected to achieve are:

1. Conversing with humans in simple English, with the goal not of simulating human conversation, but of expressing its insights and inferences to humans, and gathering information and ideas from them

2. Learning the preferences of humans and AI systems, and providing them with information in accordance with their preferences; clarifying these preferences by asking them questions and responding to their answers

3. Communicating with other AI Engines, similar to its conversations with humans, but using an AI-Engine-only language called KNOW

4. Composing knowledge files containing its insights, inferences and discoveries, expressed in KNOW or in simple English

5. Reporting on its own state, and modifying its parameters based on its self-analysis to optimize its achievement of its other goals

6. Predicting economic, financial, political and consumer data based on diverse numerical data and on concepts expressed in news

Subsequent versions of the system are expected to offer enhanced conversational fluency, and enhanced abilities at knowledge creation, including theorem proving and scientific discovery and the composition of knowledge files consisting of complex discourses. And then, of course, the holy grail: progressive self-modification leading to exponentially accelerating artificial superintelligence! These lofty goals can be achieved step by step, beginning with a relatively simple Baby Webmind and teaching it about the world as its mind structures and dynamics are improved through further scientific study.

Are these goals complex enough that the AI Engine should be called intelligent? Ultimately this is a subjective decision. My belief is, not shockingly, yes. This is not a chess program or a medical diagnosis program, capable in one narrow area and ignorant of the world at large. This is a program that studies itself and interacts with others, that ingests information from the world around it and thinks about this information, coming to its own conclusions and guiding its internal and external actions accordingly.

How smart will it be, qualitatively? My sense is that the first version will be significantly stupider than humans overall, though smarter in many particular domains; that within a couple of years from the first version’s release there may be a version that is competitive with humans in terms of overall intelligence; and that within a few more years there will probably be a version dramatically smarter than humans overall, with a much more refined self-optimized design running on much more powerful hardware.

Artificial superintelligence, step by step.

(The Lack of) Competitors in the Race to Real AI

I’ve explained what “creating a real AI” means to those of us on the Webmind AI project: creating a computer program that can achieve complex goals in a complex environment – the goal of socially interacting with humans and analyzing data in the context of the Internet, in this case – using limited computational resources and in reasonably rapid time.

Another natural question is: OK, so if AI is possible, how come it hasn’t been done before? And how come so few people are trying?

Peter Voss, a freelance AI theorist (and cryonics pioneer) whose ideas I like very much, has summarized the situation as follows:

· 80% of people in the AI field don’t really want to work on general intelligence; they’re more drawn to working on very specialized subcomponents of intelligence

· 80% of the AI people who would like to work on general intelligence are pushed to work on other things by the biases of the academic journals in which they need to publish, or of grant funding bodies

· 80% of the AI people who actually do work on general intelligence are laboring under incorrect conceptual premises

· And nearly all of the people operating under basically correct conceptual premises lack the resources to adequately realize their ideas

The presupposition of the bulk of the work in the AI field is that solving subproblems of the “real AI” problem, by addressing individual aspects of intelligence in isolation, contributes toward solving the overall problem of creating real AI. While this is of course true to a certain extent, our experience with the AI Engine suggests that it is not as true as is commonly believed. In many cases, the best approach to implementing an aspect of mind in isolation is very different from the best way to implement this same aspect of mind in the framework of an integrated, self-organizing AI system.

So who else is actually working on building generally intelligent computer systems at the moment? Not very many groups. Without being too egomaniacal about it, there is simply no evidence that anyone else has a serious and comprehensive design for a digital mind. However, we do realize that there is bound to be more than one approach to creating real AI, and we are always open to learning from the experiences of other teams with similar ambitious goals.

One intriguing project on the real AI front is Artificial Intelligence Enterprises (www.a-i.com), a small Israeli company whose engineering group is run by Jason Hutchens, a former colleague of mine from the University of Western Australia in Perth. They are a direct competitor in that they are seeking to create a conversational AI system somewhat similar to the Webmind Conversation Engine. However, they have a very small team and are focusing on statistical-learning-based language comprehension and generation rather than on deep cognition, semantics, and so forth.

Another project that relates to our work less directly is Katsunori Shimohara and Hugo de Garis’s Artificial Brain project, initiated at ATR in Japan (see http://citeseer.nj.nec.com/1572.html) and continued at Starlab in Brussels and Genotype Inc. in Boulder, Colorado. This is an attempt to create a hardware platform (the CBM, or CAM-Brain Machine) for real AI using Field-Programmable Gate Arrays to implement genetic programming evolution of neural networks. We view this fascinating work as somewhat similar to the work on the Connection Machine undertaken at Danny Hillis’s Thinking Machines Corp.: the focus is on the hardware platform, and there is not a well-articulated understanding of how to use this hardware platform to give rise to real intelligence. It is quite possible that the CBM could be used inside the Webmind AI Engine, as a special-purpose genetic programming component; but the CBM and the conceptual framework underlying it appear not to be adequate to support the full diversity of processing needed to create an artificial mind.

A project that once would have appeared to be competitive with ours, but changed its goals well before Webmind Inc. was formed, is the well-known CYC project (www.cyc.com). This began as an attempt to create true AI by encoding all common sense knowledge in first-order predicate logic. They produced a somewhat useful knowledge database and a fairly ordinary inference engine, but appear to have no R&D program aimed at creating autonomous, creative interactive intelligence.

Another previous contender who has basically abandoned the race for true AI is Danny Hillis, founder of the company Thinking Machines Corp. This firm focused on the creation of an adequate hardware platform for building real artificial intelligence – a massively parallel, quasi-brain-like machine called the Connection Machine (Hillis, 1987). However, their pioneering hardware work was not matched with a systematic effort to implement a truly intelligent program embodying all the aspects of the mind. The magnificent hardware design vision was not correlated with an equally grand and detailed mind design vision. And at this point, of course, the Connection Machine hardware has been rendered obsolete by developments in conventional computer hardware and network computing.

On the other hand, the well-known Cog project at MIT is aiming toward building real AI in the long run, but its path to real AI involves gradually building up to cognition after first getting animal-like perception and action to work via “subsumption architecture robotics.” This approach might eventually yield success, but only after decades.

Allen Newell’s well-known SOAR project (http://ai.eecs.umich.edu/soar/) is another project that once appeared to be grasping at the goal of real AI, but seems to have retreated into the role of an interesting system for experimenting with limited-domain cognitive science theories. Newell tried to build “Unified Theories of Cognition”, based on ideas that have now become fairly standard: logic-style knowledge representation, mental activity as problem-solving carried out by an assemblage of heuristics, etc. The system was by no means a total failure, but it was not constructed to have real autonomy or self-understanding. Rather, it’s a disembodied problem-solving tool. But it’s a fascinating software system, and there’s a small but still-growing community of SOAR enthusiasts in various American universities.

Of course, there are hundreds of other AI engineering projects in place at various universities and companies throughout the world, but nearly all of these involve building specialized AI systems restricted to one aspect of the mind, rather than creating an overall intelligent system. The only significant attempt to “put all the pieces together” would seem to have been the Japanese 5th Generation Computer System project. But this project was doomed by its purely engineering approach, that is, by its lack of an underlying theory of mind. Few people mention this project these days. The AI world appears to have learned the wrong lesson from it: it has taken the lesson to be that integrative AI is bad, rather than that integrative AI should be approached from a sound conceptual basis.

To build a comprehensive system, with perception, action, memory, and the ability to conceive of new ideas and to study itself, is not a simple thing. Necessarily, such a system consumes a lot of computer memory and processing power, and is difficult to program and debug because each of its parts gains its meaning largely from its interaction with the other parts. Yet, is this not the only approach that can possibly succeed at achieving the goal of a real thinking machine?

We now have, for the first time, hardware barely adequate to support a comprehensive AI system. Moore’s law and the advance of high-bandwidth networking mean that the situation is going to keep getting better and better. However, we are stuck with a body of AI theory that has excessively adapted itself to the era of weak computers, and that is consequently divided into a set of narrow perspectives, each focusing on a particular aspect of the mind. In order to make real AI work, I believe, we need to take an integrative perspective, focusing on:

· The creation of a “mind OS” that embodies the basic nature of mind, and allows specialized mind structures and algorithms dealing with specialized aspects of mind to happily coexist

· The implementation of a diversity of mind structures and dynamics (“mind modules”) on top of this mind OS

· The encouragement of emergent phenomena produced by the interaction/cooperation of the modules, so that the system as a whole is coherently responsive to its goals

This is the core of the Webmind vision. It is backed up by a design and implementation of the Mind OS, and a detailed theory, design and implementation for a minimal necessary set of mind structures and dynamics to run on top of it.

But What about Consciousness?

It’s about time to delve into the nitty-gritty of digital mind. But first, I feel obliged to inject at least a little bit about that great AI bugaboo, consciousness. Sure, the skeptics will say, a computer program can solve hard problems, and maybe even generalize its problem-solving ability across domains, but it can’t be conscious, it can’t really feel and experience, it can’t know that it is.

It’s easy to dismiss this kind of complaint by observing that none of us really knows if other humans are conscious – we just assume it, because living as a solipsist is a lot more annoying. But the concept of consciousness is worth a little more attention than this.

What we call “consciousness” has several aspects, including:

· self-observation and awareness of options

· “free will” – choice-making behavior

· inferential and empathetic powers

· perception/action loops

Across these various aspects, two more general aspects can be distinguished:

Structured consciousness: There are certain structures associated with consciousness, which are deterministic, cognitive structures, involved with inference, choice, perception/action, self-observation, and so on. These structures are manifested a bit differently in the human brain and in the AI Engine, but they are there in both places.

Raw consciousness: The “raw feel” of consciousness, which I will discuss briefly here.

What is often called the “hard problem” of consciousness is how to connect the two. Although few others may agree with me on this point, I believe I know how to do this. The answer can be found in many places; one of my favorites among these is the philosophy of Charles S. Peirce.

Peirce’s Law of Mind

Peirce, never one for timid formulations, declared that:

Logical analysis applied to mental phenomena shows that there is but one law of mind, namely, that ideas tend to spread continuously and to affect certain others which stand to them in a peculiar relation of affectability. In this spreading they lose intensity, and especially the power of affecting others, but gain generality and become welded with other ideas.

This is an archetypal vision of mind which I call "mind as relationship" or "mind as network." In modern terminology Peirce's "law of mind" might be rephrased as follows: "The mind is an associative memory network, and its dynamic dictates that each idea stored in the memory is an active agent, continually acting on those other ideas with which the memory associates it."
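Read this way, the Law of Mind resembles what AI researchers call spreading activation. The sketch below is a generic spreading-activation routine, offered only to illustrate "ideas spread and lose intensity"; it is not the AI Engine’s actual dynamics, and the `spread` function, the `decay` factor and the `threshold` cutoff are assumptions chosen for the example.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical associative memory: idea -> list of (associated idea, weight in [0, 1]).
Graph = Dict[str, List[Tuple[str, float]]]


def spread(associations: Graph, source: str, initial: float = 1.0,
           decay: float = 0.5, threshold: float = 0.05) -> Dict[str, float]:
    """Spread activation outward from `source` through weighted associations.

    Each hop multiplies intensity by weight * decay (weights in [0, 1],
    decay < 1), so influence fades with associative distance and the
    loop terminates even if the graph contains cycles.
    """
    activation: Dict[str, float] = defaultdict(float)
    activation[source] = initial
    frontier = [(source, initial)]
    while frontier:
        idea, intensity = frontier.pop()
        for neighbor, weight in associations.get(idea, []):
            passed = intensity * weight * decay
            if passed < threshold:
                continue  # too faint to affect anything further
            activation[neighbor] += passed
            frontier.append((neighbor, passed))
    return dict(activation)


memory: Graph = {
    "bird": [("fly", 0.9), ("feather", 0.8)],
    "fly": [("sky", 0.7)],
}
result = spread(memory, "bird")  # "sky" is reached only indirectly, via "fly"
```

The decay factor plays the role of Peirce’s loss of intensity: an idea two associative steps away from "bird" is affected, but more weakly than its direct associates.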

Peirce proposed a universal system of philosophical categories:

· First: pure being

· Second: reaction, physical response

· Third: relationship

Mind from the point of view of First is raw consciousness, pure presence, pure being. Mind from the point of view of Second is a physical system, a mess of chemical and electrical dynamics. Mind from the point of view of Third is a dynamic, self-reconstructing web of relations, as portrayed in the Law of Mind.

Following a suggestion of my friend, the contemporary philosopher Kent Palmer, I have added an additional element to the Peircean hierarchy:

· Fourth: synergy

In the AI Engine, ideas begin as First, as distinct software objects called Nodes or Links. They interact with each other, which is Second, producing patterns of relationships, Third. In time, stable, self-sustaining ideas develop, which are Fourth. In Peirce’s time this was metaphysics; today it is computer science!

And consciousness? Raw consciousness is “pure, unstructured experience,” an aspect of Peircean First, which manifests itself in the realm of Third as randomness. Structured consciousness, on the other hand, is a process that coherentizes mental entities, makes them more “rigidly bounded,” less likely to diffuse into the rest of the mind. The two interact in this way: structured consciousness is the process in which the randomness of raw consciousness has the biggest effect. Structured consciousness amplifies the little bits of raw consciousness present in everything into a major causal force.

The AI Engine implements structured consciousness in its system of perception and action schema and short-term memory; the raw consciousness comes along for free.

Obviously, this solution to the “hard problem” is by no means universally accepted in the cognitive science or philosophy or AI communities! There is no widely accepted view; the study of consciousness is in chaos. I anticipate that many readers will accept my theory of structured consciousness but reject my views on raw consciousness. This is fine: everyone is welcome to their own errors! The two are separable, although a complete understanding of mind must include both aspects of consciousness. Raw consciousness is a tricky thing to deal with because it is really outside the realm of science. Whether my design for structured consciousness is useful can be tested empirically; whether my theory of raw consciousness is correct cannot. Ultimately, the test of whether the AI Engine is conscious is a subjective test. If it’s smart enough and interacts with humans in a rich enough way, then humans will believe it’s conscious, and will accommodate this belief within their own theories of consciousness.

The Psynet Model of Mind

So let’s cut to the chase. Prior to the formation of Webmind Inc., inspired by Peirce, Nietzsche, Leibniz and other philosophers of mind, I spent many years of my career creating my own ambitious, integrative philosophy of mind. After years of searching for a good name, I settled for “the psynet model” instead – psy for mind, net for network.

According to the psynet model of mind:

1. A mind is a system of agents or "actors" (our currently preferred term) which are able to transform, create & destroy other actors

2. Many of these actors act by recognizing patterns in the world, or in other actors; others operate directly upon aspects of their environment

3. Actors pass attention ("active force") to other actors to which they are related

4. Thoughts, feelings and other mental entities are self-reinforcing, self-producing systems of actors, which are to some extent useful for the goals of the system

5. These self-producing mental subsystems build up into a complex network of attractors, meta-attractors, etc.

6. This network of subsystems & associated attractors is "dual network" in structure, i.e. it is structured according to at least two principles: associativity (similarity and generic association) and hierarchy (categorization and category-based control).

7. Because of finite memory capacity, mind must contain actors able to deal with "ungrounded" patterns, i.e. actors which were formed from now-forgotten actors, or which were learned from other minds rather than at first hand – this is called "reasoning". (Of course, forgetting is just one reason for abstract (or “ungrounded”) concepts to arise. The other is generalization: even if the grounding materials are still around, abstract concepts ignore their historical relations to them.)

8. A mind possesses actors whose goal is to recognize the mind as a whole as a pattern – these are "self".

[Figure: System of actors having relationships with one another and performing interactions on one another.]

According to the psynet model, at bottom the mind is a system of actors interacting with each other, transforming each other, recognizing patterns in each other, creating new actors embodying relations between each other. Individual actors may have some intelligence, but most of their intelligence lies in the way they create and use their relationships with other actors, and in the patterns that ensue from multi-actor interactions.

We need actors that recognize and embody similarity relations between other actors, and inheritance relations between other actors (inheritance meaning that one actor in some sense can be used as another one, in terms of its properties or the things it denotes). We need actors that recognize and embody more complex relationships, among more than two actors. We need actors that embody relations about the whole system, such as “the dynamics of the whole actor system tends to interrelate A and B.”
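To make the two basic relation types concrete, here is a minimal sketch in which each actor is described by a set of properties. The formulas are simple set-overlap stand-ins chosen for illustration, not the formulas the AI Engine actually uses (which this article does not specify); the function names and example property sets are hypothetical. The point is the asymmetry: similarity is symmetric, while inheritance measures how well one actor can be used as another.

```python
from typing import Set


def similarity(props_a: Set[str], props_b: Set[str]) -> float:
    """Symmetric relation: Jaccard overlap of the two property sets."""
    union = props_a | props_b
    return len(props_a & props_b) / len(union) if union else 0.0


def inheritance(props_a: Set[str], props_b: Set[str]) -> float:
    """Asymmetric relation: the fraction of B's properties that A also has,
    i.e. how well A can be used as B. 1.0 means A exhibits all of B."""
    return len(props_a & props_b) / len(props_b) if props_b else 0.0


# Hypothetical property sets for two actors.
robin = {"has_feathers", "lays_eggs", "flies", "red_breast"}
bird = {"has_feathers", "lays_eggs", "flies"}

robin_as_bird = inheritance(robin, bird)  # every bird property holds for robins
bird_as_robin = inheritance(bird, robin)  # birds in general lack "red_breast"
robin_bird_sim = similarity(robin, bird)
```

Here a robin can fully stand in for a bird (1.0), but a generic bird only partially stands in for a robin (0.75), while their similarity is the same in both directions.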

This swarm of interacting, intercreating actors leads to an emergent hierarchical ontology, consisting of actors generalizing other actors in a tree; it also leads to a sprawling network of interrelatedness, a “web of pattern” in which each actor relates to some others. The balance between the hierarchical and heterarchical aspects of the emergent network of actor interrelations is crucial to the mind.

[Figure: Dual network of actors involving hierarchical and heterarchical relationships.]

The overlap of hierarchy and heterarchy gives the mind a kind of “dynamic library card catalog” structure, in which topics are linked to other related topics heterarchically, and linked to more general or specific topics hierarchically. The creation of new subtopics or supertopics has to make sense heterarchically, meaning that the things in each topic grouping should have a lot of associative, heterarchical relations with each other.
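To make the heterarchical constraint concrete, here is a minimal sketch, assuming associative links can be stored as unordered pairs of topic names and that a grouping "makes sense" when a large enough fraction of its member pairs are associatively linked. The names `grouping_coherence` and `accept_new_topic`, the toy links, and the 0.5 threshold are all hypothetical; the AI Engine's actual criterion is not specified here.

```python
from itertools import combinations

def grouping_coherence(members, assoc_links):
    """Fraction of member pairs that share an associative (heterarchical) link."""
    pairs = list(combinations(sorted(members), 2))
    if not pairs:
        return 0.0
    linked = sum(1 for a, b in pairs if frozenset((a, b)) in assoc_links)
    return linked / len(pairs)

def accept_new_topic(members, assoc_links, threshold=0.5):
    """Hypothetical rule: add a hierarchical supertopic over `members` only if
    they are already densely interrelated heterarchically."""
    return grouping_coherence(members, assoc_links) >= threshold

# Undirected associative links, stored as unordered pairs (toy data).
assoc_links = {frozenset(p) for p in [
    ("robin", "sparrow"), ("robin", "eagle"), ("sparrow", "eagle"), ("eagle", "hawk"),
]}

print(accept_new_topic({"robin", "sparrow", "eagle"}, assoc_links))  # True: 3 of 3 pairs linked
print(accept_new_topic({"robin", "sparrow", "hawk"}, assoc_links))   # False: 1 of 3 pairs linked
```

The threshold encodes the trade-off the text describes: hierarchy is allowed to grow only where heterarchy already supports it.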

Macro-level mind patterns like the dual network are built up by many different actors; according to the natural process of mind-actor evolution, they’re also “sculpted” by the deletion of actors. All these actors recognizing patterns and creating new actors that embody them produce a huge combinatorial explosion of actors. Given the finite resources that any real system has at its disposal, forgetting is crucial to the mind: not every actor that’s created can be retained forever. Forgetting means that, for example, a mind can retain the datum that birds fly without retaining much of the specific evidence that led it to this conclusion. The generalization "birds fly" is a pattern A in a large collection of observations B; A is retained, but the observations B are not.

Obviously, a mind's intelligence will be enhanced if it forgets strategically, i.e., forgets those items which are the least intense patterns. And this ties in with the notion of mind as an evolutionary system.

A system which is creating new actors, and then forgetting actors based on their relative uselessness, is evolving by natural selection. This evolution is the creative force opposing the conservative force of self-production (actor intercreation).
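Strategic forgetting can be sketched as capacity-bounded selection: keep the most intense patterns and drop the rest, which is one round of the selection just described. In this minimal sketch the intensity scores and the `forget_strategically` helper are hypothetical; the point is only that the general pattern "birds fly" outlives the individual observations that grounded it.

```python
import heapq

def forget_strategically(patterns, capacity):
    """Keep the `capacity` most intense patterns; return (kept, forgotten).

    `patterns` maps a pattern to a hypothetical intensity score.
    """
    kept = dict(heapq.nlargest(capacity, patterns.items(), key=lambda kv: kv[1]))
    forgotten = sorted(set(patterns) - set(kept))
    return kept, forgotten

memory = {
    "birds fly": 0.9,
    "flying objects can fall": 0.8,
    "sparrow seen flying": 0.2,
    "robin seen at 10:02": 0.1,
    "robin seen at 10:05": 0.1,
}
kept, forgotten = forget_strategically(memory, capacity=2)
print(kept)       # {'birds fly': 0.9, 'flying objects can fall': 0.8}
print(forgotten)  # ['robin seen at 10:02', 'robin seen at 10:05', 'sparrow seen flying']
```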

Forgetting ties in with the notion of grounding. A pattern X is "grounded" to the extent that the mind contains entities in which X is in fact a pattern. For instance, the pattern "birds fly" is grounded to the extent that the mind contains specific memories of birds flying. Few concepts are completely grounded in the mind, because of the need for drastic forgetting of particular experiences. This leads us to the need for "reasoning," which is, among other things, a system of transformations specialized for producing incompletely grounded patterns from incompletely grounded patterns.

Consider, for example, the reasoning "Birds fly, flying objects can fall, so birds can fall." Given extremely complete groundings for the observations "birds fly" and "flying objects can fall", the reasoning would be unnecessary, because the mind would contain specific instances of birds falling and could therefore reach the conclusion "birds can fall" directly, without going through two ancillary observations. But if specific memories of birds falling do not exist in the mind, because they have been forgotten or because they have never been observed in the mind's incomplete experience, then reasoning must be relied upon to yield the conclusion.
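The birds example can be made quantitative with an uncertain deduction rule. The sketch below uses the standard independence-based deduction formula from probabilistic term logics, `s_AC = s_AB*s_BC + (1 - s_AB)*(s_C - s_B*s_BC)/(1 - s_B)`, where `s_AB` is the strength of "A inherits from B" and `s_B`, `s_C` are term probabilities. This formula is an assumption here, not necessarily the exact rule the AI Engine uses, and the numbers are made up for illustration.

```python
def deduce(s_ab, s_bc, s_b, s_c):
    """Estimate the strength of A -> C from A -> B and B -> C.

    Assumes A and C are independent given B and given not-B, so
    P(C|A) = P(B|A) P(C|B) + P(not B|A) P(C|not B).
    """
    if not 0.0 <= s_b < 1.0:
        raise ValueError("s_b must lie in [0, 1)")
    # P(C | not B), recovered from the term probabilities by total probability.
    s_c_not_b = (s_c - s_b * s_bc) / (1.0 - s_b)
    s_c_not_b = min(1.0, max(0.0, s_c_not_b))  # clamp inconsistent inputs
    return s_ab * s_bc + (1.0 - s_ab) * s_c_not_b

# A = birds, B = flying objects, C = things that can fall (made-up numbers).
s_birds_fall = deduce(s_ab=0.9, s_bc=0.95, s_b=0.2, s_c=0.3)
print(round(s_birds_fall, 3))  # 0.869
```

The conclusion is only as strong as its premises allow: no memories of falling birds are needed, which is exactly the role the text assigns to reasoning.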

The necessity for forgetting is particularly intense at the lower levels of the system. In particular, most of the patterns picked up by the perceptual-cognitive-active loop are of ephemeral interest only and are not worthy of long-term retention in a resource-bounded system. The fact that most of the information coming into the system is going to be quickly discarded, however, means that the emergent information contained in perceptual input should be mined as rapidly as possible, which gives rise to the phenomenon of "short-term memory."

What is short-term memory? A mind must contain actors specialized for rapidly mining information deemed highly important (information recently obtained via perception, or else identified by the rest of the mind as being highly essential). This is "short-term memory." It must be strictly bounded in size to avoid combinatorial explosion, since the number of combinations (possible grounds for emergence) of N items is exponential in N. The short-term memory is a space within the mind devoted to looking at a small set of things from as many different angles as possible.
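The size bound matters because N items have 2^N - N - 1 subsets of two or more elements. A minimal sketch of a bounded short-term memory, assuming a simple first-in-first-out eviction policy; the class name and the default capacity of 7 are hypothetical choices, not taken from the AI Engine.

```python
from collections import deque
from itertools import combinations

class ShortTermMemory:
    """Hypothetical bounded STM: holds at most `capacity` recent items."""

    def __init__(self, capacity=7):
        self.items = deque(maxlen=capacity)  # the oldest item falls out automatically

    def perceive(self, item):
        self.items.append(item)

    def groupings(self):
        """Yield every subset of two or more items: candidate grounds for emergence."""
        items = list(self.items)
        for k in range(2, len(items) + 1):
            yield from combinations(items, k)

def grouping_count(n):
    """Subsets of size >= 2: all 2**n subsets minus the empty set and n singletons."""
    return 2 ** n - n - 1

stm = ShortTermMemory(capacity=3)
for percept in ["a", "b", "c", "d"]:
    stm.perceive(percept)
print(list(stm.items))             # ['b', 'c', 'd']
print(len(list(stm.groupings())))  # 4
print(grouping_count(20))          # 1048555
```

Looking at three items "from every angle" costs 4 groupings; twenty items would already cost over a million.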

From what I’ve said so far, the psynet model is a highly general theory of the nature of mind. Large aspects of the human mind, however, are not general at all, and deal only with specific things such as recognizing visual forms, moving arms, etc. This is not a peculiarity of humans but a general feature of intelligence.

The generality of a transformation may be defined as the variety of possible entities that it can act on; in this sense, the actors in a mind will have a spectrum of degrees of specialization, frequently with more specialized actors residing lower in the hierarchy. In particular, a mind must contain procedures specialized for perception and action; and when specific procedures of this kind are used repeatedly, they may become “automatized,” that is, cast in a form that is more efficient to use but less flexible and adaptable.

This puts the Webmind AI Engine (WAE) in a position congruent with that of contemporary neuroscience, which has found evidence both for global, generic neural structures and for highly domain-specific localized processing.

Another thing that actors specialize for is communication. Linguistic communication is carried out by stringing together symbols over time. It is hierarchically based in that the symbols are grouped into categories, and many of the properties of language may be understood by studying these categories. More specifically, the syntax of a language is defined by a collection of categories, and "syntactic transformations" mapping sequences of categories into categories. Parsing is the repeated application of syntactic transformations; language production is the reverse process, in which categories are progressively expanded into sequences of categories. Semantic transformations map structures involving semantic categories and particular words or phrases into actors representing generic relationships like similarity and inheritance. They take structures in the domain of language and map them into the generic domain of mind.
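Parsing as repeated application of syntactic transformations can be illustrated with a toy grammar. In this sketch the lexicon, the three rules, and the greedy leftmost reduction strategy are all assumptions chosen for brevity; greedy reduction happens to work for this unambiguous grammar but is not a general parser, and the AI Engine itself handles syntax through feature structure unification rather than this scheme. `expand` runs the same rules in reverse, mirroring the description of production.

```python
LEXICON = {"the": "Det", "dog": "N", "cat": "N", "sees": "V"}
# Syntactic transformations: a sequence of categories maps to one category.
RULES = {("Det", "N"): "NP", ("V", "NP"): "VP", ("NP", "VP"): "S"}

def parse(words):
    """Greedily apply the leftmost matching transformation until none applies."""
    cats = [LEXICON[w] for w in words]
    changed = True
    while changed:
        changed = False
        for i in range(len(cats) - 1):
            pair = (cats[i], cats[i + 1])
            if pair in RULES:
                cats[i:i + 2] = [RULES[pair]]
                changed = True
                break
    return cats

def expand(category):
    """Production: run the rules backwards, expanding a category into lexical categories."""
    for sequence, result in RULES.items():
        if result == category:
            return [c for part in sequence for c in expand(part)]
    return [category]

print(parse("the dog sees the cat".split()))  # ['S']
print(expand("S"))                            # ['Det', 'N', 'V', 'Det', 'N']
```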

And language brings us to the last crucial feature of mind: self and socialization. Language is used for communicating with others, and the structures used for semantic understanding are largely social in nature (actor, agent, and so forth); language is also used purely internally to clarify thought, and in this sense it’s a projection of the social domain into the individual. Communicating about oneself via words or gestures is a key aspect of building oneself.

The "self" of a mind (not the “higher self” of Eastern religion, but the “psychosocial” self) is a poorly grounded pattern in the mind's own past. In order to have a nontrivial self, a mind must possess not only the capacity for reasoning, but also a sophisticated reasoning-based tool (such as syntax) for transferring knowledge from strongly grounded to poorly grounded domains. It must also have memory and a knowledge base. All these components are clearly strengthened by the existence of a society of similar minds, making the learning and maintenance of self vastly easier.

The self is useful for guiding the perceptual-cognitive-active information-gathering loop in productive directions. Knowing its own holistic strengths and weaknesses, a mind can do better at recognizing patterns and using these to achieve goals. The presence of other similar beings is of inestimable use in recognizing the self: one models one's self on a combination of what one perceives internally, the external consequences of actions, evaluations of the self given by other entities, and the structures one perceives in other similar beings. It would be possible to have self without society, but society makes it vastly easier: by leading to syntax, with its facility at mapping grounded domains into ungrounded domains; by providing an analogue for inference of the self; by feeding external evaluations back to the self; and by affording knowledge bases and informational alliances with other intelligent beings.

Clearly there is much more to mind than all this; as we’ve learned over the last few years, working out the details of each of these points uncovers a huge number of subtle issues. But, even without further specialization, this list of points does say something about AI. It dictates, for example:

- that an AI system must be a dynamical system, consisting of entities (actors) which are able to act on each other (transform each other) in a variety of ways, and some of which are able to evaluate simplicity (and hence recognize pattern);

- that this dynamical system must be sufficiently flexible to enable the crystallization of a dual network structure, with emergent, synergetic hierarchical and heterarchical subnets;

- that this dynamical system must contain a mechanism for the spreading of attention in directions of shared meaning;

- that this dynamical system must have access to a rich stream of perceptual data, so as to be able to build up a decent-sized pool of grounded patterns, leading ultimately to the recognition of the self;

- that this dynamical system must contain entities that can reason (transfer information from grounded to ungrounded patterns);

- that this dynamical system must contain entities that can manipulate categories (hierarchical subnets) and transformations involving categories in a sophisticated way, so as to enable syntax and semantics;

- that this dynamical system must recognize symmetric, asymmetric and emergent meaning sharing, and build meanings using temporal and spatial relatedness, as well as relatedness of internal structure and relatedness in the context of the system as a whole;

- that this dynamical system must have a specific mechanism for paying extra attention to recently perceived data ("short-term memory");

- that this dynamical system must be embedded in a community of similar dynamical systems, so as to be able to properly understand itself;

- that this dynamical system must act on and be acted on by some kind of reasonably rich world or environment.

It is interesting to note that these criteria, while simple, are not met by any previously designed AI system, let alone any existing working program. The Webmind AI Engine strives to meet all these criteria.

The Mind OS

The Webmind AI Engine embodies the psynet model of mind by creating a “self-organizing actors OS” (“Mind OS”), a piece of software that we call the Webmind Core, and then creating a large number of special types of actors running on top of this.

Most abstractly, we have Node actors, which embody coherent wholes (texts, numerical data series, concepts, trends, schema for acting); Link actors, which embody relationships between Nodes (similarity, logical inheritance or implication, data flow, etc.); Stimulus actors, which spread attention between Nodes and Links; and Wanderer actors, which move around between Nodes building Links.
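A minimal sketch of three of these actor types as plain data structures, assuming nodes are identified by name and links are directed and weighted. All class and method names are hypothetical; in the real Webmind Core (a Java system) these were autonomous actors rather than passive records, and Wanderers are omitted here for brevity.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    """A coherent whole: a text, a data series, a concept, a schema..."""
    name: str
    activation: float = 0.0

@dataclass
class Link:
    """A directed, weighted relationship between two Nodes (e.g. similarity, inheritance)."""
    kind: str
    source: str
    target: str
    strength: float

@dataclass
class Stimulus:
    """A packet of attention addressed to one Node."""
    target: str
    amount: float

@dataclass
class Net:
    """Hypothetical container standing in for the Mind OS."""
    nodes: dict = field(default_factory=dict)
    links: list = field(default_factory=list)

    def add_node(self, name):
        self.nodes[name] = Node(name)

    def link(self, kind, source, target, strength):
        self.links.append(Link(kind, source, target, strength))

    def deliver(self, stimulus):
        # Receiving a Stimulus raises the target Node's activation.
        self.nodes[stimulus.target].activation += stimulus.amount

    def neighbors(self, name):
        return [lk.target for lk in self.links if lk.source == name]

net = Net()
net.add_node("bird")
net.add_node("animal")
net.link("inheritance", "bird", "animal", 0.9)
net.deliver(Stimulus("bird", 0.5))
print(net.neighbors("bird"))         # ['animal']
print(net.nodes["bird"].activation)  # 0.5
```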

These general types of actors are then specialized into 100 or so node types and a dozen link types, which carry out various specialized aspects of mind, but all within the general framework of mind as a self-organizing, self-creating actor system.

There are also some macro-level actors, Data-Structure Specialized MindServers, which simulate the behaviors of special types of nodes and links in especially efficient, application-specific ways. These too are mind-actors, though of a specialized kind.

Each actor in the whole system is viewed as having its own little piece of consciousness, its own autonomy, its own life cycle; but the whole system has a coherence and focus as well, eliminating component actors that are not useful and causing useful actors to survive and intertransform with other useful actors in an evolutionary way.

Self-organizing actors OS, relying on perceptual data that comes in through short-term memory and is translated into system patterns. In turn, these patterns are subject to meaning sharing and reasoning, driven by system dynamics toward the emergence of self.

There are a number of special languages that the MindOS uses to talk to other software programs. This kind of communication is mediated by a program called the CommunicatorSpace. Languages called MindScript, MindSpeak, and KNOW are used for various purposes, and messages in all these languages are transmitted in a special format called the Agent Interaction Protocol (AIP).

Communications between Webmind, its clients and other Webminds

Architecture of the Webmind AI Engine

On top of the Mind OS, we’re building a large and complex software system, with many different macro-level parts, most (but not quite all) based on the same common data structures and dynamics, and interacting using the Mind OS’s messaging system, and controlled overall using the Mind OS’s control framework.

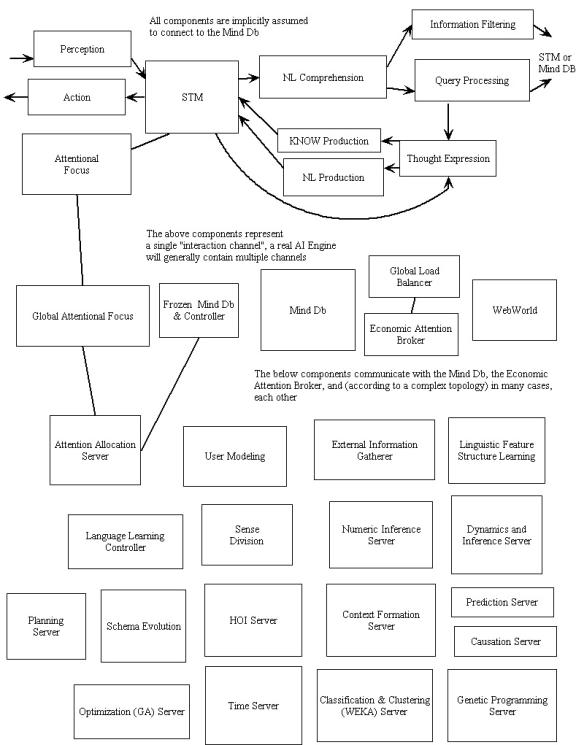

The following diagram roughly summarizes the breakdown of high-level components in the system:

Of course, a complete review of all these parts and what they do would be far beyond the scope of this brief article. A quick summary will have to suffice!

The Cognitive Core

Many of the components in the above architecture diagram are based on something called the “cognitive core.” This is a collection of nodes and links and processes that embodies what we feel is the essence of the mind, the essence of thinking. Different cognitive cores may exist in the same AI Engine, with different focuses and biases.

The AI processes going on in the cognitive core are:

- Activation spreading

- Wanderer-based link formation

- Importance updating

- Association finding (“halo formation”)

- Inference (first-order and higher-order inference, FOI and HOI)

- Node fission/fusion and CompoundRelationNode formation

- Schema execution

- Context and goal formation

- Schema learning

- Natural language (NL) feature structure unification

- Assignment of credit

- Refreshing of STM

- Adaptation of parameters of cognitive processes

- Deletion and loading of nodes and links

What do all these things mean?

First, activation spreading is simple enough. This process cycles through everything in the RT, spreading activation according to a variant on familiar neural network mathematics. There is some subtlety here in that the AI Engine contains various types of links that determine their weights in different ways, and they need to be treated fairly.
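One synchronous step of activation spreading might look like the following sketch. The decay and rate parameters are hypothetical, and so is the "fairness" rule: each link type's weights are divided by that type's largest weight, so that a type whose weights live on a larger scale (here a Hebbian weight of 20.0) does not swamp a type with weights in [0, 1]. How the AI Engine actually balances link types is not described in this article.

```python
from collections import defaultdict

def spread_activation(activation, links, decay=0.5, rate=0.3):
    """One synchronous spreading step.

    activation: node -> current activation.
    links: (kind, source, target, weight) tuples.
    Each weight is divided by the largest weight of its link kind, a hypothetical
    way of treating link types with different weight scales fairly.
    """
    max_w = defaultdict(float)
    for kind, _, _, w in links:
        max_w[kind] = max(max_w[kind], w)
    new = {node: decay * a for node, a in activation.items()}
    for kind, src, dst, w in links:
        norm = w / max_w[kind] if max_w[kind] > 0 else 0.0
        new[dst] = new.get(dst, 0.0) + rate * activation.get(src, 0.0) * norm
    return new

activation = {"bird": 1.0, "animal": 0.0, "wing": 0.0}
links = [
    ("inheritance", "bird", "animal", 0.9),  # weights in [0, 1]
    ("hebbian", "bird", "wing", 20.0),       # co-occurrence count, larger scale
]
print(spread_activation(activation, links))  # {'bird': 0.5, 'animal': 0.3, 'wing': 0.3}
```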

Importance updating is a process that determines how important each node or link is, and thus how much CPU time it gets. There’s a special formula for the importance of a node, based on its activation, the usefulness that’s been gained by processing it in the recent past, and so forth. Activation spreads around in the network, and this causes nodes that are linked to important nodes to tend to become important in the future – a simple manifestation of Peirce’s Law of Mind mentioned above.
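A hedged sketch of importance updating followed by importance-proportional CPU allocation. The blend of activation and recent usefulness, the smoothing factor `alpha`, and the weights are hypothetical stand-ins for the AI Engine's own formula, which the article does not spell out.

```python
def update_importance(old, activation, usefulness, alpha=0.2, w_act=0.6, w_use=0.4):
    """Hypothetical rule: move importance a fraction `alpha` toward a weighted
    mix of current activation and usefulness gained from recent processing."""
    target = w_act * activation + w_use * usefulness
    return (1 - alpha) * old + alpha * target

def allocate_cpu(importance, total_cycles):
    """Share processing cycles in proportion to importance (rounded, so the
    shares need not sum exactly to total_cycles)."""
    total = sum(importance.values())
    if total == 0:
        return {node: 0 for node in importance}
    return {node: round(total_cycles * imp / total) for node, imp in importance.items()}

print(round(update_importance(0.5, activation=1.0, usefulness=0.0), 2))  # 0.52
print(allocate_cpu({"bird": 3.0, "wing": 1.0}, 100))                     # {'bird': 75, 'wing': 25}
```

Because importance moves gradually, a node that is briefly activated gains some CPU time but keeps it only if processing it keeps paying off.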

Wanderer-based link formation is a process that selects pairs of nodes and, for each pair selected, builds links of several types between them, based on comparing the links of other types that the nodes already have. This is used to build inheritance and similarity links representing logical relationships, and also more Hebbian-style links representing temporal associations. There are also wanderers especially designed to create links based on higher-order reasoning: links between CompoundRelationNodes representing logical combinations of links, and so forth. Wanderers are guided in their wandering by links representing loose associations between nodes.
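A sketch of a single wanderer, assuming loose associations are stored as adjacency sets and that the similarity of two nodes is the Jaccard overlap of the inheritance links they already have. The function name, the threshold, and the toy data are hypothetical, and the real wanderers built several link types at once rather than only similarity.

```python
import random

def jaccard(a, b):
    """Overlap of two link sets: |a & b| / |a | b|."""
    return len(a & b) / len(a | b) if a | b else 0.0

def wander(start, assoc, inherits, steps, rng, threshold=0.3):
    """Hypothetical wanderer: follow loose association links from `start`; at each
    hop, propose a similarity link whose strength is the overlap of the two
    nodes' existing inheritance links."""
    new_links = {}
    node = start
    for _ in range(steps):
        options = sorted(assoc.get(node, ()))
        if not options:
            break
        nxt = rng.choice(options)
        sim = jaccard(inherits.get(node, set()), inherits.get(nxt, set()))
        if sim >= threshold:
            new_links[frozenset((node, nxt))] = sim
        node = nxt
    return new_links

assoc = {"robin": {"sparrow"}, "sparrow": {"robin"}}
inherits = {"robin": {"bird", "animal"}, "sparrow": {"bird", "animal", "small"}}
for pair, strength in wander("robin", assoc, inherits, steps=3, rng=random.Random(0)).items():
    print(sorted(pair), round(strength, 2))  # ['robin', 'sparrow'] 0.67
```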

Reasoning is a process that chooses important nodes that haven’t been reasoned on lately, and seeks logical relations between them using the rules of uncertain logic. Similarly, association formation is a process that chooses important nodes and finds other nodes that are loosely associated with them.

Node Fission/fusion picks important nodes out of the net and combines them or subdivides them to create new nodes. CompoundRelationNode formation, on the other hand, picks links – relationships – out of the net and creates new nodes joining them via logical formulas. These processes provide the net with its creativity: they put new ideas in the system for the other processes to act upon as they wish. In evolutionary terms, very loosely, one might say that these processes provide reproduction, and the other processes provide fitness evaluation and selection based on fitness.

Schema execution picks SchemaNodes (nodes representing procedures to be carried out) from the net and then executes them, running them as little programs.

Context formation is a special process that mines a data structure called the TimeServer, which contains a complete record of all the system’s perceptions and actions, and creates ContextNodes representing meaningful groupings of perceptions and actions.

Goal formation creates new goals for the system. It starts off with some basic goals – to make users happy, to keep its memory usage away from the danger level, to create new information. It then uses heuristics to create new GoalNodes that relate to current GoalNodes as subgoals or supergoals. It picks important GoalNodes and then acts on them, executing schema that are thought to be useful for achieving these goals in current contexts. There is an assignment of credit process that rewards schema that have been useful for important goals in important contexts.
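The assignment of credit step can be sketched as weighting each schema's contribution by the importance of the goal and context involved. The multiplicative rule, the goal and context names, and the `helped` scores below are hypothetical illustrations, not the AI Engine's actual formula.

```python
from collections import defaultdict

def assign_credit(episodes, goal_importance, context_importance):
    """Hypothetical rule: a schema earns goal importance x context importance x
    how much it helped (0..1), summed over the episodes in which it was used."""
    credit = defaultdict(float)
    for schema, goal, context, helped in episodes:
        credit[schema] += goal_importance[goal] * context_importance[context] * helped
    return dict(credit)

def pick_schema(candidates, credit):
    """Prefer the candidate with the most accumulated credit."""
    return max(candidates, key=lambda s: credit.get(s, 0.0))

goals = {"make_users_happy": 1.0, "keep_memory_safe": 0.5}
contexts = {"chat": 0.8, "idle": 0.2}
episodes = [
    ("greet", "make_users_happy", "chat", 1.0),
    ("compress", "keep_memory_safe", "idle", 1.0),
    ("joke", "make_users_happy", "chat", 0.5),
]
credit = assign_credit(episodes, goals, contexts)
print(pick_schema(["greet", "joke", "compress"], credit))  # greet
```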

Learning of new schema is perhaps the trickiest task faced by the cognitive core. There is a process that exists just to decide which problems to focus schema learning on. This process finds goals that are important, and that are not adequately satisfied in some important contexts. It uses node formation and reasoning, together, to try to find schema that will satisfy these goals in the given contexts. It has to use inference to evaluate existing SchemaNodes to tell how well they satisfy the target goal in the target context. It has to create new random SchemaNodes using components that have been useful for achieving similar goals in similar contexts. It has to select pairs of SchemaNodes based on their effectiveness at achieving the target goal in the target context, and cross over and mutate them….
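The crossover-and-mutation part of schema learning resembles a genetic algorithm. This sketch assumes schema are fixed-length lists of primitive steps and replaces the inference-based evaluation described above with a toy fitness function that counts matches against a target; the primitives, the target, and all parameters are hypothetical.

```python
import random

PRIMITIVES = ["look", "move", "grab", "wait", "speak"]  # hypothetical schema steps
TARGET = ["look", "move", "grab"]                        # toy stand-in for "satisfies the goal"

def fitness(schema):
    """Toy fitness: how many steps match the target behaviour."""
    return sum(a == b for a, b in zip(schema, TARGET))

def crossover(a, b, rng):
    """One-point crossover of two equal-length step lists (length >= 2)."""
    cut = rng.randint(1, len(a) - 1)
    return a[:cut] + b[cut:]

def mutate(schema, rng, rate=0.2):
    """Replace each step with a random primitive with probability `rate`."""
    return [rng.choice(PRIMITIVES) if rng.random() < rate else step for step in schema]

def evolve(population, generations, rng):
    population = list(population)
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        parents = population[: max(2, len(population) // 2)]  # selection; the best always survives
        children = []
        while len(parents) + len(children) < len(population):
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = parents + children
    return max(population, key=fitness)

rng = random.Random(42)
start = [[rng.choice(PRIMITIVES) for _ in range(3)] for _ in range(8)]
best = evolve(start, generations=30, rng=rng)
print(best, fitness(best))
```

Keeping the fitter half intact means the best schema found so far is never lost, while crossover and mutation keep supplying new candidates.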

Finally, language processing is dealt with via special CompoundRelationNodes representing linguistic information, called FeatureStructureCRN’s. Syntax processing is handled via a variant of lexicalized feature structure unification grammar, fully unified with the other AI processes in the system.
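Feature structure unification, the core operation of unification grammars, can be sketched in a few lines, assuming feature structures are nested dictionaries with atomic leaves. This sketch omits reentrancy (shared substructures), which real unification grammars need, and the feature names are illustrative only; it is not the FeatureStructureCRN implementation.

```python
def unify(a, b):
    """Unify two feature structures given as nested dicts with atomic leaves.

    Returns the merged structure, or None if some feature has clashing values.
    Reentrancy (shared substructures) is deliberately not modelled.
    """
    if isinstance(a, dict) and isinstance(b, dict):
        result = dict(a)
        for feature, value in b.items():
            if feature in result:
                merged = unify(result[feature], value)
                if merged is None:
                    return None
                result[feature] = merged
            else:
                result[feature] = value
        return result
    return a if a == b else None

dogs = {"cat": "N", "agr": {"num": "pl", "per": 3}}  # lexical entry for "dogs"
bark_subj = {"agr": {"num": "pl"}}                     # subject constraint of "bark"
barks_subj = {"agr": {"num": "sg"}}                    # subject constraint of "barks"
print(unify(dogs, bark_subj))   # {'cat': 'N', 'agr': {'num': 'pl', 'per': 3}}
print(unify(dogs, barks_subj))  # None
```

Agreement checking falls out of unification: "dogs bark" unifies, "dogs barks" fails on the number feature.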

And then a few support processes are needed. Statistics about the various AI processes and their successes and failures need to be gathered. Unimportant nodes and links need to be deleted, and replaced with new ones. The short-term memory, a special data structure consisting of nodes and links of current importance, needs to be filled up with newly important things.

All these processes have to work together, tightly and intimately, on the same set of nodes. That’s how the mind works; that’s how emergent intelligence is generated from the combination of diverse specialized AI processes.

Good and Bad News

After three years of work, we’ve made all these different aspects of the mind fit together conceptually, in a wonderfully seamless way. We’ve implemented software code that manifests this conceptual coherence. That’s the good news.

The bad news is that, efficiency-wise, our experience so far indicates that the AI Engine 0.5 architecture is probably not going to be sufficiently efficient (in either speed or memory) to allow the full exercise of all the code that’s been written for the cognitive core. Thus a rearchitecture of the cognitive core is in progress, based on the same essential ideas.

Essentially, the solidification of the conceptual design of the system over the last year allows us to make a variety of optimizations aimed at specializing some of the very general structures in the current system so as to do better at the specific kinds of processes that we are actually asking these structures to carry out. The current system is written for extreme generality, and it has allowed us to experimentally design and implement a wide variety of AI processes (although, for efficiency reasons, not to test all of them in realistic situations or in interesting combinations). Now that, through this experimental process, we have learned specifically what kinds of AI processes we want, we can morph the system into something more specifically tailored to carry out these processes effectively. Fortunately, the current software architecture is sufficiently flexible that it will almost surely be possible to move to a more efficient architecture gradually, without abandoning the current general software framework or rewriting all the code.

Major System Components

I’ve shown you a diagram of the whole AI Engine and how it breaks down into components, and then I’ve explained the cognitive core, a key subsystem that underlies many of the system components. The next step is to tell you what the various components in the overall diagram of the system actually do. As with my review of the cognitive core above, this is going to have to be done at a fairly high level, since these are deep and tricky concepts and this is just a brief overview article.

The AttentionalFocus, first of all, is the part of the system that contains all types of nodes and links, all brewing together in a highly CPU-intensive way, carrying out diverse types of activities and manifesting emergent intelligence. It has the most active cognitive core of all the components in the system.

The STM (short-term memory), distinct from the AttentionalFocus, is a little cognitive core that contains things of relevance to the system’s current situation. Its contents change fast, of course. Recent percepts, recent actions, planned actions and related concepts live here.

The MindDB is a very large database that contains every node and link the system has ever entertained. It’s static: it doesn’t do anything except receive nodes and links from other components and give nodes and links out to other components.

The interactions between the MindDB and the AttentionalFocus are mediated by the AttentionAllocationServer, a component that does nothing but embody Peirce’s Law of Mind: activation spreading and importance updating. It contains a large percentage of the nodes and links in the MindDB. Its job is to take things out of the MindDB and push them into the AttentionalFocus or the STM when they become generally important or relevant to the current situation.

The “CompoundRelationNode miner” is a specialized process that scans the MindDB for “patterns,” i.e., abstract structures that occur frequently in the net. The nodes it produces are sent to the higher-order inference server for evaluation; this server is a cognitive core that devotes most of its attention to higher-order reasoning.

The SchemaExecutionServer is a cognitive core full of SchemaNodes, and potentially other nodes as well. It contains little mind programs that run and do things.

The SchemaLearning server is a cognitive core focused on learning new schema.

The context formation server is a cognitive core devoted to forming new contexts, as described earlier. And finally, the dynamics server is a cognitive core devoted to “lightweight” processes: it runs through a large percentage of the nodes in the MindDB and carries out basic AI processes on them, such as forming associations, first-order inference, and wanderer-based link building.

These are all the basic components needed to make the mind work. The other components are specialized for specific mental abilities like language comprehension and generation, language learning, downloading information from the Web, reasoning about numbers, using higher-order inference to make plans, etc.

It seems big and complicated. But so is the human brain, despite its modest three-pound mass. There are hundreds of specialized regions in the brain; look at any 3D brain atlas. All the brain regions use the same basic structures (neurons, synapses, neurotransmitters), but deployed in different ways, in different combinations, etc. Similarly, all the specialized components of the AI Engine operate on the same substrate, nodes and links. Most of them are cognitive cores with special mixes of processes. But it’s important to have this componentized structure, to enable reasonably efficient solution of the various problems the AI Engine is confronted with. The vision of the mind as a self-organizing actor system is upheld, but enhanced: each mind is a self-organizing actor system that is structured in a particular way, so that the types of actors that most need to act in various practical situations get the chance to act sufficiently often. The current design is a marriage of philosophical mind-theory and engineering practicality.

Obstacles on the Path Ahead

The three big challenges that we seem to face in moving from AI Engine 0.5 to AI Engine 1.0, and thus creating the world’s first real AI, are:

- computational (space and time) efficiency;

- getting knowledge into the system to accelerate experiential learning;

- parameter tuning for intelligent performance.

We’ve already discussed the efficiency issue and the strategic rearchitecting that is taking place in order to address it.

Regarding getting knowledge into the system, we are embarking on several related efforts. Several of these involve a formal language we have created called KNOW, a sort of logical/mathematical language that corresponds especially well with the AI Engine’s internal data structures. For example, in KNOW, “John gives the book to Mary” might look like:

{ [give John Mary book1 (1.0 0.9)]

[Inheritance book1 book (1.0 0.9)]

[author book1 John (1.0 0.9)] }

This KNOW text is composed of three sentences. Give, inheritance and author are relations (links), and John, Mary, book1 and book are the arguments (nodes). A text in KNOW can also be represented in XML format, which is convenient for various purposes.
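Because KNOW sentences map directly onto links and nodes, a parser for the bracketed form is short. This sketch infers the grammar from this single example: it assumes each sentence has the form `[relation arg1 ... argN (x y)]` and treats the pair `(x y)` as a strength and a confidence, which is an assumption since the article does not say what the two numbers mean. Real KNOW syntax may well differ, and `parse_know` is a hypothetical name.

```python
import re

# One sentence: [relation arg1 ... argN (x y)], inferred from the single example.
SENTENCE = re.compile(r"\[(\w+)((?:\s+\w+)+)\s+\(([\d.]+)\s+([\d.]+)\)\]")

def parse_know(text):
    """Return (relation, args, strength, confidence) tuples for each sentence.

    Treating (x y) as (strength, confidence) is an assumption; relation names are
    lower-cased so that "Inheritance" and "inheritance" coincide.
    """
    links = []
    for rel, args, x, y in SENTENCE.findall(text):
        links.append((rel.lower(), tuple(args.split()), float(x), float(y)))
    return links

text = """
[give John Mary book1 (1.0 0.9)]
[Inheritance book1 book (1.0 0.9)]
[author book1 John (1.0 0.9)]
"""
for link in parse_know(text):
    print(link)  # first line: ('give', ('John', 'Mary', 'book1'), 1.0, 0.9)
nodes = {arg for _, args, _, _ in parse_know(text) for arg in args}
print(sorted(nodes))  # ['John', 'Mary', 'book', 'book1']
```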

Our knowledge encoding efforts include the following:

- Conversion of structured database data into KNOW format for import into the AI Engine (this is for declarative knowledge)

- Human encoding of common-sense facts in KNOW

- Human encoding of relevant actions (both external actions like file manipulations, and internal cognitive actions) using “schema programs” written in KNOW

- The Baby Webmind user interface, enabling knowledge acquisition through experiential learning (this helps with both declarative and procedural knowledge)

- Creation of language training datasets so that schema operating in various parts of the natural language module can be trained via supervised learning

Regarding parameter optimization, there have been several major obstacles to effective work in this area so far:

- The slowness of the system has made the testing required for automatic parameter optimization prohibitively time-consuming

- The interactions between various parameters are difficult to sort out

- The complexity of the system makes debugging difficult, so that parameter tuning and debugging end up being done simultaneously

One of the consequences of the system rearchitecture described above will be to make parameter optimization significantly easier, both by improving system speed and by creating various system components that each involve fewer parameters.

Summing up the directions proposed in these three problem areas (efficiency, knowledge acquisition, and parameter tuning), one general observation to be made is that, at this stage of our design work, analogies to the human mind/brain are playing less and less of a role, whereas the realities of computer hardware and of machine learning testing and training procedures are playing more and more of a role. In a larger sense, what this presumably means is that while the analogies to the human mind helped us gain a conceptual understanding of how AI has to be done, now that we have this conceptual understanding, we can keep the conceptual picture fixed and vary the underlying implementation and teaching procedures in ways that have less to do with humans and more to do with computers.

Finally, while the above issues are the ones that currently preoccupy us, it’s also worth briefly noting the obstacles that we expect to face in getting from AI Engine 1.0 to AI Engine 2.0, once the current problems are surmounted.

The key goal with AI Engine 2.0 is for the system to be able to fully understand its own source code, so it can improve itself through its own reasoning and make itself progressively more intelligent.

In theory, this can lead to an exponential acceleration of system intelligence over time. The two obstacles faced in turning AI Engine 1.0 into such a system are:

- the creation of appropriate “inference control schema” for the particular types of higher-order inference involved in mathematical reasoning and program optimization;

- the entry of relevant knowledge into the system.

The control schema problem appears to be solvable through supervised learning, in which the system is incrementally led through less and less simplistic problems in these areas (basically, this means we will teach the system these things, as is done with humans).

The knowledge entry problem is trickier, and has two parts:

- giving the system a good view into its Java implementation;

- giving the system a good knowledge of algorithms and data structures (without which it can’t understand why its code is structured as it is).

Giving the system a meaningful view into Java requires mapping Java code into a kind of abstract “state transition graph,” a difficult problem which fortunately has been solved by some of our friends at Supercompilers LLC (www.supercompilers.com) in the course of their work creating a Java supercompiler. Giving the system a knowledge of algorithms and data structures could be done by teaching the system to read mathematics and computer science papers, but we suspect this is a trickier task than it may seem, because these papers are a specialized form of human discourse, not as formal as they appear at first glance. In order to jump-start the system’s understanding of scientific literature in these areas, we believe it will be useful to explicitly encode knowledge about algorithms and data structures in the Mizar formalized mathematics language, from which it can then be directly translated into AI Engine nodes and links. (This is a project that we would undertake now, if we were faced with an infinite-human-resources situation!)

Experiential Interactive Learning

Encoding knowledge into the system is all very well, but this can never be the primary way a mind gains information. Knowledge encoding is only useful as an augmentation to learning through direct interaction with the world and with other minds -- learning through experience.

Human infants learn through experience, and as we all know this is a long and difficult process. We’ve seen, in the previous sections of this article, the incredible number of specialized mind-actors that appear to be necessary in order to implement, within practical computational constraints, the self-organizing system of intercreating actors that is the mind. Given this diversity and complexity, it’s sobering to realize that this integrated AI Engine will not, when initially completed, be a mature mind: it will be an unformed infant.

The experience of this AI Engine infant will be diverse, including spidering the Web, answering user queries, analyzing data series, and so on. But in order for the Baby Webmind to grow into a mature and properly self-aware system, it will need to be interacted with closely, and taught, much like a young human. Acting, perceiving and planning intelligently must begin on the simple “baby” level, and be learned via interaction with another intelligent mind. The system’s intelligence will then increase exponentially with its experience.

The result of this “Baby Webmind” teaching project will be a Webmind AI Engine that can converse about what it’s doing, not necessarily with 100% English fluency nor with the ability to simulate a human, but with spontaneity and directness and intelligence – speaking from its own self like the real living being it is. We intend to create a system that will subjectively appear intelligent and self-aware to a majority of intelligent human beings (including computer scientists). Note that this is NOT the Turing Test: we are not seeking to fool anyone into believing that Webmind is a human. Creating an AI system with that kind of acting ability will be saved for later!

The basic ideas of experiential interactive learning are very general, and would apply to a Webmind AI Engine with arbitrarily diverse sense organs – eyes, ears, nose, tongue, and so on. However, we have worked out the ideas in detail only in the concrete context of the current AI Engine, whose inputs are textual and numerical only.

Extension of this framework to deal with music, sound, image or video input could be accomplished fairly naturally; the difficulties lie in current computational processing limitations and file manipulation mechanics rather than in the data structures and algorithms involved.

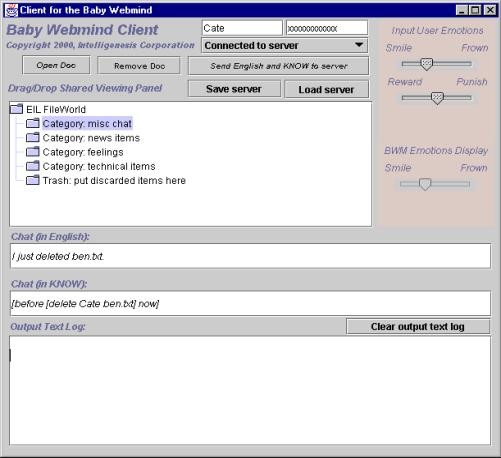

Baby Webmind User Interface

The Baby Webmind User Interface provides a simple yet flexible medium within which the AI Engine can interact with humans and learn from them.

It has the following components:

· A chat window, where we can chat with the AI Engine

· Reward and punishment buttons, which ideally should allow us to vary the amount of reward or punishment (a very hard smack as opposed to just a plain ordinary smack…)

· A way for us to enter our emotions, along several dimensions

· A way for the AI Engine to show us its emotions [technically: the importance values of some of its FeelingNodes]
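The four channels listed above can be pictured as two message types passing between the interface and the engine. The following Python sketch is hypothetical throughout: the class and field names, the signed reward scale from -1.0 (a very hard smack) to 1.0, and the [0, 1] range for emotion and importance values are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class UserTurn:
    """One message from the human side of a (hypothetical) Baby Webmind UI."""
    chat_text: str = ""
    # Signed reward magnitude: positive = reward, negative = punishment.
    # The [-1.0, 1.0] range is an assumption; -1.0 is "a very hard smack".
    reward: float = 0.0
    # User-reported emotions along several named dimensions, each in [0, 1].
    emotions: Dict[str, float] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Reject values outside the assumed ranges.
        if not -1.0 <= self.reward <= 1.0:
            raise ValueError("reward must lie in [-1.0, 1.0]")
        for name, value in self.emotions.items():
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"emotion {name!r} must lie in [0.0, 1.0]")


@dataclass
class EngineTurn:
    """One message from the engine side: a chat reply plus a snapshot of
    selected FeelingNode importance values (node names are illustrative)."""
    chat_text: str
    feeling_importances: Dict[str, float]


def strongest_feeling(turn: EngineTurn) -> str:
    """Return the FeelingNode with the highest importance, e.g. for display."""
    return max(turn.feeling_importances, key=turn.feeling_importances.get)
```

For instance, `strongest_feeling(EngineTurn("Thanks!", {"happiness": 0.7, "curiosity": 0.4}))` returns `"happiness"`, which the interface could highlight as the engine's dominant mood.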

A comment on the emotional aspect is probably appropriate here.

Inputting emotion values via GUI widgets is obviously a very crude way of getting across emotion, compared to human facial expressions. The same is true of Baby Webmind’s list of FeelingNode importances: this is not really the essence of the system’s feelings, which are distributed across its internal network. Ultimately we’d like more flexible emotional interchange, but for starters, I reckon this system gives at least as much emotional interchange as one gets through e-mail and emoticons.

The next question is: what is Baby Webmind talking to us, and sharing feelings with us, about? What world is it acting in?

Initially, Baby Webmind’s world consists of a database of files, which it interacts with via a series of operations representing its basic receptors and actuators. It has a built-in ability to perceive files, directories, and URLs, and to carry out several dozen basic file operations.
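To make the receptor/actuator idea concrete, here is a minimal Python sketch of a file world confined to one sandbox directory. It shows one receptor and three of the several dozen operations mentioned above; the class, method and action names are hypothetical, and the real system's operation set is not reproduced here.

```python
import os
import shutil
import tempfile
from typing import Callable, Dict, List


class FileWorld:
    """A toy world of files rooted in one sandbox directory (hypothetical)."""

    def __init__(self, root: str) -> None:
        self.root = os.path.abspath(root)
        # Actuators registered by name, so an agent can choose among them.
        self.actuators: Dict[str, Callable[..., None]] = {
            "copy": self.copy,
            "rename": self.rename,
            "make_dir": self.make_dir,
        }

    def _path(self, name: str) -> str:
        # Resolve a name inside the sandbox and refuse to escape it.
        path = os.path.abspath(os.path.join(self.root, name))
        if os.path.commonpath([self.root, path]) != self.root:
            raise ValueError(f"{name!r} is outside the world")
        return path

    # --- receptor ----------------------------------------------------------
    def perceive(self, name: str = ".") -> List[str]:
        """List a directory's entries, marking subdirectories with '/'."""
        base = self._path(name)
        return sorted(
            entry + ("/" if os.path.isdir(os.path.join(base, entry)) else "")
            for entry in os.listdir(base)
        )

    # --- actuators ---------------------------------------------------------
    def copy(self, src: str, dst: str) -> None:
        shutil.copyfile(self._path(src), self._path(dst))

    def rename(self, src: str, dst: str) -> None:
        os.rename(self._path(src), self._path(dst))

    def make_dir(self, name: str) -> None:
        os.makedirs(self._path(name), exist_ok=True)

    def act(self, action: str, *args: str) -> None:
        """Dispatch an actuator by name, as an action-selection loop might."""
        self.actuators[action](*args)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as sandbox:
        with open(os.path.join(sandbox, "notes.txt"), "w") as f:
            f.write("hello")
        world = FileWorld(sandbox)
        world.act("make_dir", "archive")
        world.act("copy", "notes.txt", "archive/notes.txt")
        print(world.perceive())           # ['archive/', 'notes.txt']
        print(world.perceive("archive"))  # ['notes.txt']
```

Registering actuators in a dictionary keyed by name is a deliberate choice: a learning process can then treat actions as symbols it chooses among, rather than as hard-wired calls.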

The system’s learning process is guided by a basic motivational structure. The AI Engine wants to achieve its goals, and its Number One goal is to be happy.

Its initial motivation in performing conversational and other acts in the Baby Webmind interface is to make itself happy (as defined by its Happiness FeelingNode).

Diagram of the EIL information workflow and major processing phases, from the User Interface to the Webmind server and back again, using our Agent Interaction Protocol to mediate communications.

How is the Happiness FeelingNode defined? For starters:

1. If the humans interacting with it are happy, this increases the WAE’s happiness.

2. Discovering interesting things increases the WAE’s happiness.

3. Getting happiness tokens (from the user clicking the UI’s reward button) increases the WAE’s happiness.
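One simple way to combine the three determinants is a weighted sum, smoothed over time. The Python sketch below is an assumption, not the WAE's actual formula: the weights, the [0, 1] ranges, the saturating treatment of reward tokens and the decay rate are all illustrative choices. The only property it takes from the list above is that each determinant, all else being equal, raises happiness.

```python
from dataclasses import dataclass


@dataclass
class HappinessInputs:
    """Per-cycle evidence for the three determinants (ranges are assumed)."""
    user_happiness: float   # reported happiness of interacting humans, in [0, 1]
    interestingness: float  # interest of recent discoveries, in [0, 1]
    reward_tokens: int      # reward-button clicks since the last update


@dataclass
class HappinessWeights:
    """Illustrative weights; they sum to 1 so the evidence stays in [0, 1]."""
    user: float = 0.4
    discovery: float = 0.3
    reward: float = 0.3


def happiness_update(current: float, inputs: HappinessInputs,
                     weights: HappinessWeights, decay: float = 0.9) -> float:
    """Return the new happiness level, clamped to [0, 1].

    The determinants are combined as a weighted sum. Reward tokens
    saturate (each extra token counts for less), and the old level decays
    toward the new evidence so happiness changes smoothly over time.
    """
    token_signal = inputs.reward_tokens / (1 + inputs.reward_tokens)
    evidence = (weights.user * inputs.user_happiness
                + weights.discovery * inputs.interestingness
                + weights.reward * token_signal)
    new_level = decay * current + (1 - decay) * evidence
    return max(0.0, min(1.0, new_level))
```

For example, starting from 0.5 with happy users, no new discoveries and one reward token, the update gives roughly 0.505: the single token contributes half its maximum signal, and the decay keeps any one interaction from moving the level by more than 0.1.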

The determinants of happiness in humans change as the human becomes more mature, in ways that are evolutionarily programmed into the brain. We will need to effect this in the AI Engine as well, manually modifying the grounding of happiness in the Happiness FeelingNode as the system progresses through stages of maturity. Eventually this process will be automated, once there are many Webmind instances being taught by many other people and other Webmind instances; but the first time around, this will be a process of ongoing human experimentation.
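Manually regrounding happiness as the system matures could be as simple as a stage table that a human teacher advances by hand. In this hypothetical Python sketch, the stage names, the weights per determinant (users' happiness, discovery, reward tokens) and the linear blending between stages are all assumptions, chosen only to show the mechanism.

```python
from typing import Dict, List

# Hypothetical stages and weights: early on, reward tokens dominate;
# later, intrinsic interest in discoveries matters more.
STAGE_WEIGHTS: Dict[str, Dict[str, float]] = {
    "infant":     {"user": 0.4, "discovery": 0.1, "reward": 0.5},
    "child":      {"user": 0.4, "discovery": 0.3, "reward": 0.3},
    "adolescent": {"user": 0.3, "discovery": 0.5, "reward": 0.2},
}
STAGE_ORDER: List[str] = ["infant", "child", "adolescent"]


class HappinessGrounding:
    """Holds the current maturity stage; a human teacher advances it by hand."""

    def __init__(self) -> None:
        self.stage_index = 0

    @property
    def stage(self) -> str:
        return STAGE_ORDER[self.stage_index]

    def advance_stage(self) -> str:
        """Move to the next stage (no-op at the last stage)."""
        if self.stage_index < len(STAGE_ORDER) - 1:
            self.stage_index += 1
        return self.stage

    def weights(self, transition: float = 1.0) -> Dict[str, float]:
        """Weights for the current stage, blended with the previous stage.

        transition=0.0 gives the previous stage's weights and 1.0 the
        current stage's, so a change can be phased in gradually.
        """
        if not 0.0 <= transition <= 1.0:
            raise ValueError("transition must be in [0, 1]")
        new = STAGE_WEIGHTS[self.stage]
        old = STAGE_WEIGHTS[STAGE_ORDER[max(self.stage_index - 1, 0)]]
        return {k: (1 - transition) * old[k] + transition * new[k] for k in new}
```

Blending lets the teacher phase in a new stage gradually instead of changing the Happiness FeelingNode's grounding in a single jump.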

Webworld

One of the most critical aspects of the AI Engine – schema learning, the learning of procedures for perceiving, acting and thinking – is also one of the most computationally intractable. Based on our work so far, this is the one aspect of mental processing that seems to consume an inordinate amount of compute power.

Some aspects of computational language learning are also extremely computationally intensive, though less so than schema learning.

Fortunately, though, none of these supremely computation-intensive tasks need to be done in real time. This means that they can be carried out through large-scale distributed processing, across thousands or even millions of machines loosely connected via the Net. Our system for accomplishing this kind of wide-scale “background processing” is called Webworld.

Webworld is a sister software system to the AI Engine, sharing some of the same codebase, but serving a complementary function. A Webworld lobe is a much lighter-weight version of an AI Engine lobe, which can live on a single-processor machine with a modest amount of RAM, and potentially a slow connection to other machines.

Webworld lobes host actors just like normal mind lobes, and they exchange actors and messages with other Webworld lobes and with AI Engines. AI Engines can dispatch non-real-time, non-data-intensive “background thinking” processes (like schema learning and language learning problems) to Webworld, thus immensely enhancing the processing power at their disposal.
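The routing rule described above (ship out work that is neither real-time nor data-intensive) can be sketched in a few lines of Python. This is a local stand-in under stated assumptions: a thread pool plays the role of remote Webworld lobes, and the `Task` and `Dispatcher` names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class Task:
    name: str
    fn: Callable[[], Any]
    real_time: bool = False       # needed within the current interaction?
    data_intensive: bool = False  # needs bulk data held by the AI Engine?


class Dispatcher:
    """Routes work either to the local AI Engine or to 'Webworld'.

    Webworld is simulated by a local thread pool; in the design this
    section describes, the work would instead travel over the Net to many
    lightweight Webworld lobes.
    """

    def __init__(self, webworld_workers: int = 4) -> None:
        self.webworld = ThreadPoolExecutor(max_workers=webworld_workers)

    @staticmethod
    def offloadable(task: Task) -> bool:
        # Only non-real-time, non-data-intensive work is shipped out.
        return not task.real_time and not task.data_intensive

    def run_all(self, tasks: List[Task]) -> Dict[str, Any]:
        """Run every task and return {task name: result}."""
        # Hand background work to Webworld first so it runs concurrently...
        pending = {t.name: self.webworld.submit(t.fn)
                   for t in tasks if self.offloadable(t)}
        # ...while real-time or data-heavy work runs locally right away.
        results = {t.name: t.fn() for t in tasks if not self.offloadable(t)}
        # Finally collect the background results (blocking if still running).
        for name, future in pending.items():
            results[name] = future.result()
        return results

    def shutdown(self) -> None:
        self.webworld.shutdown(wait=True)
```

Because CPython threads share one interpreter lock, a thread pool will not actually speed up CPU-bound work such as schema search; a real implementation would need separate processes or machines, which is what Webworld's network of lobes provides. The routing decision itself stays the same.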

Webworld is a key part of the Webmind Inc. vision of an intelligent Internet. It allows the AI Engine’s intelligence to effectively colonize the entire Net, rather than remaining restricted to small clusters of sufficiently powerful machines.

The Position of AI in the Cosmos

We’ve been discussing the AI Engine as a mind, an isolated system – reviewing its internals. But actually this is a limited, short-term view. The AI Engine will start out as an isolated mind, but gradually, as it becomes a part of Internet software products, it will become a critical part of the Internet, causing significant changes in the Internet itself.

To understand the potential nature of these changes, it’s useful to introduce an additional philosophical concept, the metasystem transition.

The term, coined by Valentin Turchin, refers, roughly speaking, to the point in a system’s evolution at which the whole comes to dominate the parts.

According to current physics theories, there was a metasystem transition in the early universe, when the primordial miasma of disconnected particles cooled down and settled into atoms. There was a metasystem transition on Earth around four billion years ago, when the steaming primordial seas caused inorganic chemicals to clump together in groups capable of reproduction and metabolism. (Or, as recent experiments suggest, perhaps this did not first happen at the surface but deep in crevasses, at high pressures and temperatures.) Unicellular life emerged, and once chemicals are embedded in life-forms, the way to understand them is not in terms of chemistry alone, but rather in terms of concepts like fitness, evolution, sex, and hunger. And there was another metasystem transition when multicellular life burst forth: suddenly the cell was no longer an autonomous life form, but rather a component in a life form on a higher level.

Note that the metasystem transition is not an antireductionist concept, in the strict sense. The idea isn’t that multicellular lifeforms have cosmic emergent properties that can’t be explained from the properties of cells. Of course, if you had enough time and superhuman patience, you could explain what happens in a human body in terms of the component cells. The question is one of naturalness and comprehensibility, or in other words, efficiency of expression. Once you have a multicellular lifeform, it’s much easier to discuss and analyze the properties of this lifeform by reference to the emergent level than by going down to the level of the component cells. In a puddle full of paramecia, on the other hand, the way to explain observed phenomena is usually by reference to the individual cells, rather than the whole population – the population has less wholeness, fewer interesting properties, than the individual cells.

In the domain of mind, there are also a couple of levels of metasystem transition. The first is what we might call the emergence of “mind modules.” This is when a huge collection of basic mind components (cells, in a biological brain; “software objects” in a computer mind) all come together in a unified structure to carry out some complex function. The whole is greater than the sum of the parts: the complex functions that the system performs aren’t really implicit in any of the particular parts of the system; rather, they arise from the coordination of the parts into a coherent whole. The various parts of the human visual system are wonderful examples of this: billions of cells firing every which way, all orchestrated together to do one particular thing, namely to map the retina’s output into a primitive map of lines, shapes and colors, to be analyzed by the rest of the brain. The best current AI systems are also examples of this. In fact, I’d be reluctant to call computer systems that haven’t passed this transition “AI” in any serious sense.

But mind modules aren’t real intelligence, not in the sense that we mean it: intelligence as the ability to achieve complex goals in complex environments. Each mind module does only one kind of thing; it requires inputs of a special type to be fed to it and cannot dynamically adapt to a changing environment.

Intelligence itself requires one more metasystem transition: the